Retroformer: Retrospective Large Language Agents with Policy Gradient Optimization

Abstract

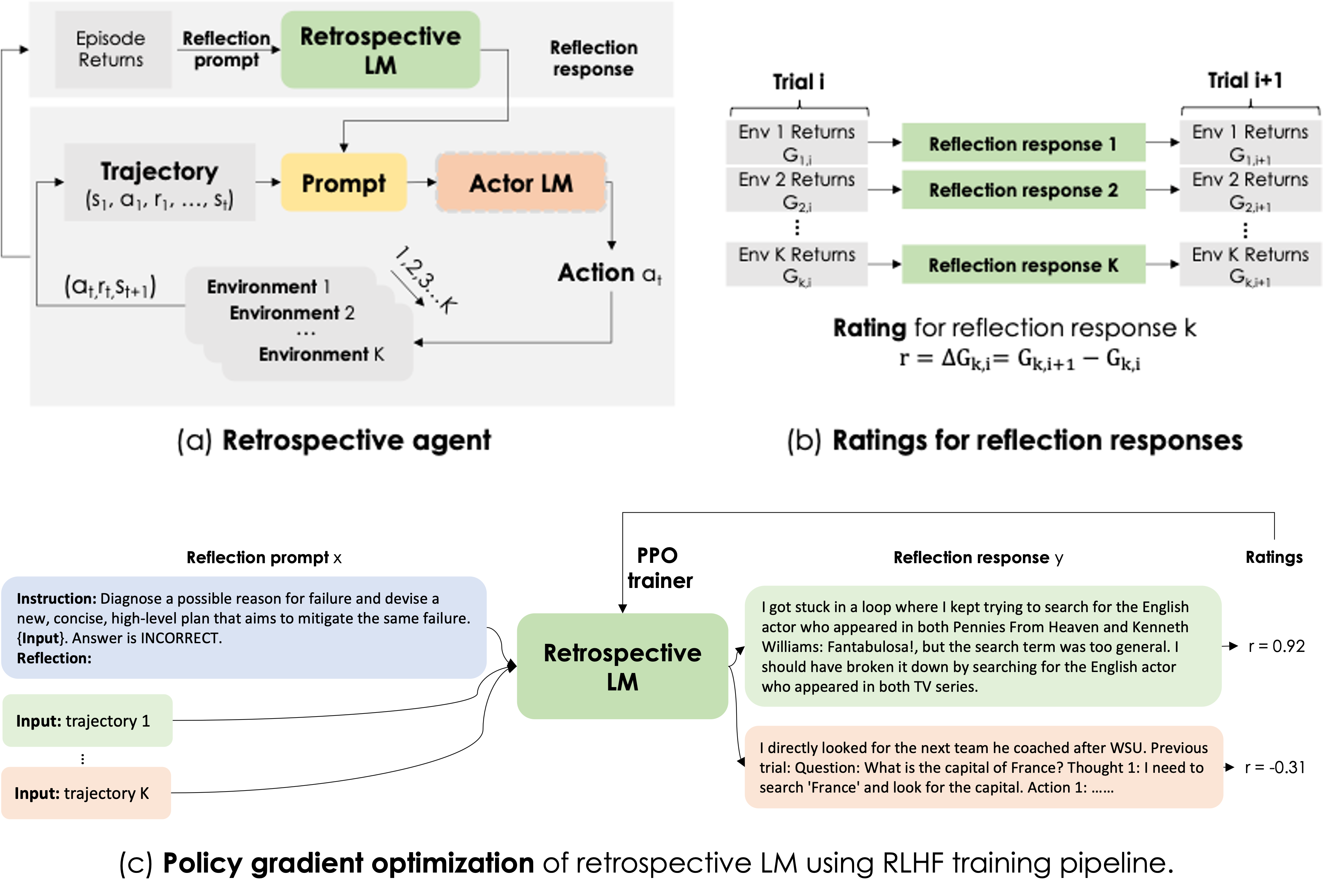

Recent months have seen the emergence of a powerful new trend in which large language models (LLMs) are augmented to become autonomous language agents capable of performing objective-oriented multi-step tasks on their own, rather than merely responding to queries from human users. Most existing language agents, however, are not optimized using environment-specific rewards. Although some agents enable iterative refinement through verbal feedback, they do not reason and plan in ways that are compatible with gradient-based learning from rewards. This paper introduces a principled framework for reinforcing large language agents by learning a retrospective model, which automatically tunes the language agent prompts from environment feedback through policy gradient. Specifically, our proposed agent architecture learns from rewards across multiple environments and tasks, and uses them to fine-tune a pre-trained language model that refines the language agent prompt by summarizing the root cause of prior failed attempts and proposing action plans. Experimental results on various tasks demonstrate that the language agents improve over time and that our approach considerably outperforms baselines that do not properly leverage gradients from the environment.
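To make the policy-gradient idea in the abstract more concrete, here is a minimal toy sketch. It is not the authors' implementation: the fine-tuned language model is swapped for a three-way softmax policy over hypothetical reflection strings, and the environment is replaced by made-up success probabilities. The names `REFLECTIONS`, `SUCCESS_PROB`, and `train`, as well as all hyperparameters, are assumptions chosen for illustration.

```python
import math
import random

# Hypothetical reflections a retrospective model might add to the agent prompt.
REFLECTIONS = [
    "Repeat the same plan.",
    "Search for the missing entity before answering.",
    "Answer immediately without searching.",
]

# Toy stand-in for the environment (made-up numbers): probability that the
# agent's next attempt succeeds when prompted with each reflection.
SUCCESS_PROB = [0.2, 0.8, 0.1]


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train(steps=2000, lr=0.1, seed=0):
    """REINFORCE with a running-average baseline on a softmax policy."""
    rng = random.Random(seed)
    logits = [0.0] * len(REFLECTIONS)
    baseline = 0.0
    for _ in range(steps):
        probs = softmax(logits)
        # Sample which reflection to give the agent for its next attempt.
        action = rng.choices(range(len(REFLECTIONS)), weights=probs)[0]
        # Simulated environment feedback: 1.0 on success, 0.0 on failure.
        reward = 1.0 if rng.random() < SUCCESS_PROB[action] else 0.0
        advantage = reward - baseline
        baseline += 0.05 * (reward - baseline)
        # Gradient of log pi(action) w.r.t. the logits is one_hot(action) - probs.
        for i in range(len(logits)):
            grad_log_pi = (1.0 if i == action else 0.0) - probs[i]
            logits[i] += lr * advantage * grad_log_pi
    return softmax(logits)


if __name__ == "__main__":
    for text, p in zip(REFLECTIONS, train()):
        print(f"{p:.3f}  {text}")
```

In this toy setting the policy should shift probability toward the reflection with the highest simulated success rate. In the framework described above, the policy would instead be a pre-trained language model generating free-form reflections, and the rewards would come from real task environments.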

Framework

BibTeX

@inproceedings{yao2024retroformer,
  title={Retroformer: Retrospective Large Language Agents with Policy Gradient Optimization},
  author={Weiran Yao and Shelby Heinecke and Juan Carlos Niebles and Zhiwei Liu and Yihao Feng and Le Xue and Rithesh R N and Zeyuan Chen and Jianguo Zhang and Devansh Arpit and Ran Xu and Phil L Mui and Huan Wang and Caiming Xiong and Silvio Savarese},
  booktitle={The Twelfth International Conference on Learning Representations},
  year={2024},
  url={https://openreview.net/forum?id=KOZu91CzbK}
}