Abstract

Evaluating the output of Large Language Models (LLMs) is one of the most critical aspects of building a performant compound AI system. Because LLM outputs propagate to downstream steps, identifying LLM errors is crucial to system performance. A common task for LLMs in AI systems is tool use. While there are several benchmark environments for evaluating LLMs on this task, they typically report only a success rate, without any explanation of the failure cases. To solve this problem, we introduce SpecTool, a new benchmark for identifying error patterns in LLM output on tool-use tasks. Our benchmark dataset comprises queries from diverse environments that can be used to test for the presence of seven newly characterized error patterns. Using SpecTool, we show that even the most prominent LLMs exhibit these error patterns in their outputs. Researchers can use the analysis and insights from SpecTool to guide their error mitigation strategies.

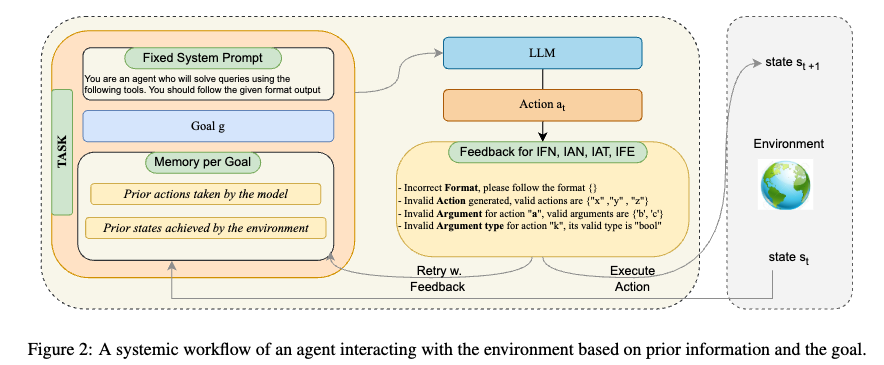

Framework

BibTeX

@article{kokane2024spectool,
  title={SpecTool: A Benchmark for Characterizing Errors in Tool-Use LLMs},
  author={Kokane, Shirley and Zhu, Ming and Awalgaonkar, Tulika and Zhang, Jianguo and Hoang, Thai and Prabhakar, Akshara and Liu, Zuxin and Lan, Tian and Yang, Liangwei and Tan, Juntao and others},
  journal={arXiv preprint arXiv:2411.13547},
  year={2024}
}